Maximiser la vraisemblance, partie 1

Précédemment, nous avons choisi la mean de l’échantillon comme estimation du paramètre de modèle de population mu. Mais comment savoir si la moyenne d’échantillon est le meilleur estimateur ? La question est délicate, procédons donc en deux étapes.

Dans la partie 1, vous allez utiliser une approche computationnelle pour calculer la log-vraisemblance d’une estimation donnée. Puis, dans la partie 2, nous verrons qu’en calculant la log-vraisemblance pour de nombreuses valeurs possibles de l’estimation, une valeur donnera la vraisemblance maximale.

Cet exercice fait partie du cours

Introduction à la modélisation linéaire en Python

Instructions

- Calculez les méthodes

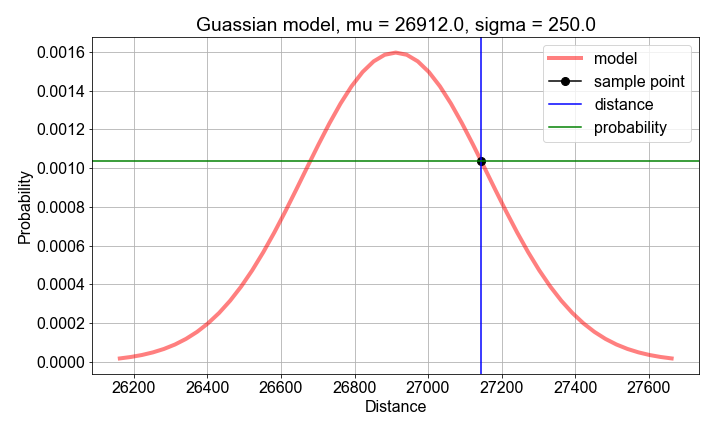

mean()etstd()sursample_distances(préchargé) pour obtenir les valeurs supposées des paramètres du modèle probabiliste. - Calculez la probabilité pour chaque

distanceen utilisantgaussian_model()construit à partir desample_meanetsample_stdev. - Calculez la

loglikelihoodcomme lasum()deslog()des probabilitésprobs.

Exercice interactif pratique

Essayez cet exercice en complétant cet exemple de code.

# Compute sample mean and stdev, for use as model parameter value guesses

mu_guess = np.____(sample_distances)

sigma_guess = np.____(sample_distances)

# For each sample distance, compute the probability modeled by the parameter guesses

probs = np.zeros(len(sample_distances))

for n, distance in enumerate(sample_distances):

probs[n] = gaussian_model(____, mu=____, sigma=____)

# Compute and print the log-likelihood as the sum() of the log() of the probabilities

loglikelihood = np.____(np.____(probs))

print('For guesses mu={:0.2f} and sigma={:0.2f}, the loglikelihood={:0.2f}'.format(mu_guess, sigma_guess, ____))