Applying Double Q-learning

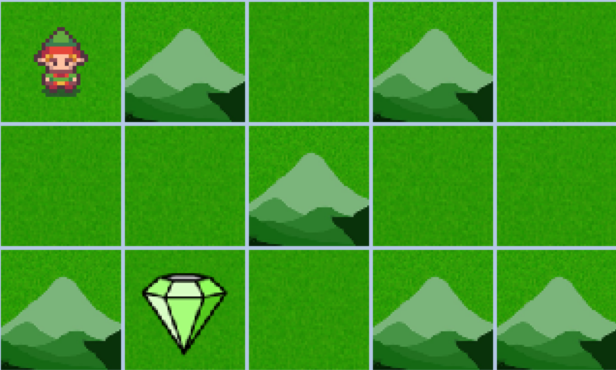

In this exercise, you will apply the Double Q-learning algorithm in the same custom environment you solved with Expected SARSA, so that you can compare the two approaches. By maintaining two Q-tables, Double Q-learning reduces the overestimation bias inherent in standard Q-learning, which tends to make learning more stable. You will use this method to navigate the grid, aiming for the highest reward while avoiding the mountains and reaching the goal as quickly as possible.
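To make the idea of two Q-tables concrete, here is a minimal sketch of a single Double Q-learning update. It is not the `update_q_tables()` function from the previous exercise: the name `double_q_update`, its signature, and the default values of `alpha` and `gamma` are assumptions made for illustration. One table, picked at random, selects the best next action, and the other table supplies the value of that action.

```python
import numpy as np

def double_q_update(Q, state, action, reward, next_state,
                    alpha=0.1, gamma=0.99, rng=None):
    """Apply one Double Q-learning update in place (hypothetical helper).

    Q is a list of two separate arrays of shape (num_states, num_actions).
    Returns the index of the table that was updated.
    """
    if rng is None:
        rng = np.random.default_rng()
    i = int(rng.integers(2))  # table to update, chosen at random
    j = 1 - i                 # the other table evaluates the chosen action
    # Table i picks the greedy next action ...
    best_next_action = np.argmax(Q[i][next_state])
    # ... and table j provides its value, which decouples selection from evaluation.
    target = reward + gamma * Q[j][next_state, best_next_action]
    Q[i][state, action] += alpha * (target - Q[i][state, action])
    return i
```

This sketch does not treat terminal transitions specially. It relies on the rows of terminal states never being updated, so they stay at their initial value of zero.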

This exercise is part of the course

Reinforcement Learning with Gymnasium in Python

Instructions

- Update the Q-tables using the `update_q_tables()` function you coded in the previous exercise.
- Combine the Q-tables by adding them together.
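As an illustration of the second step, the sketch below sums two small Q-tables and reads a greedy policy from the result. The arrays `q_a` and `q_b` and their values are made up for this example and have nothing to do with the exercise environment.

```python
import numpy as np

# Two toy Q-tables with 2 states and 2 actions (illustrative values only)
q_a = np.array([[0.2, 0.9],
                [0.5, 0.1]])
q_b = np.array([[0.4, 0.3],
                [0.0, 0.7]])

# Element-wise sum of the two estimates
combined = q_a + q_b

# Greedy policy: the highest-valued action in each state
policy = {s: int(np.argmax(combined[s])) for s in range(combined.shape[0])}
print(policy)
```

Summing and averaging give the same greedy policy, since dividing by two does not change which action has the largest value in a state.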

Hands-on interactive exercise

Try this exercise by completing this sample code.

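    import numpy as np

    # env, num_states, num_actions, num_episodes, update_q_tables() and
    # render_policy() are provided by the exercise environment.

    # Two independent Q-tables (a list comprehension avoids sharing one array)
    Q = [np.zeros((num_states, num_actions)) for _ in range(2)]

    for episode in range(num_episodes):
        state, info = env.reset()
        terminated = False
        truncated = False
        # Stop when the episode ends, whether by termination or truncation
        while not (terminated or truncated):
            # Explore with uniformly random actions
            action = np.random.choice(num_actions)
            next_state, reward, terminated, truncated, info = env.step(action)
            # Update the Q-tables
            ____
            state = next_state

    # Combine the learned Q-tables
    Q = ____

    # Greedy policy: best action in each state
    policy = {state: np.argmax(Q[state]) for state in range(num_states)}
    render_policy(policy)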
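When building the list of two Q-tables, it matters whether the list holds two separate arrays or the same array twice. The short sketch below, using small made-up shapes, shows the difference between list multiplication and a list comprehension.

```python
import numpy as np

# List multiplication repeats a reference to the SAME array
aliased = [np.zeros((2, 2))] * 2
aliased[0][0, 0] = 1.0
print(aliased[1][0, 0])   # the "second" table changed as well

# A list comprehension creates two independent arrays
separate = [np.zeros((2, 2)) for _ in range(2)]
separate[0][0, 0] = 1.0
print(separate[1][0, 0])  # the second table is untouched
```

With aliased tables, any in-place update to one table is also applied to the other, so the two estimates never differ and the method loses the decoupling it depends on.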