Actor Critic loss calculations

As a final step before you can train your agent with A2C, write a calculate_losses() function which returns the losses for both networks.

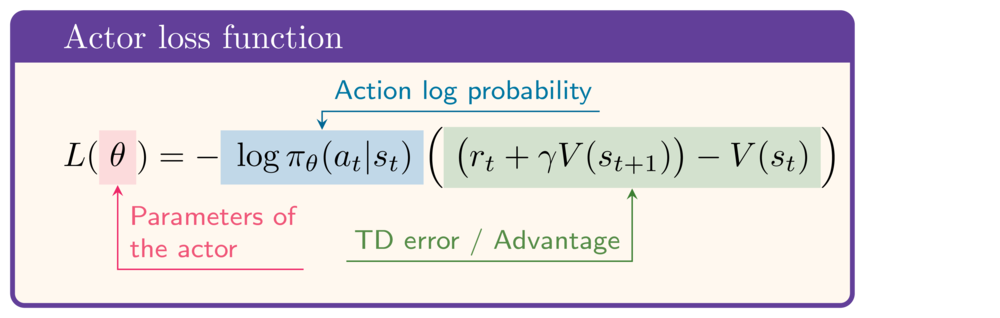

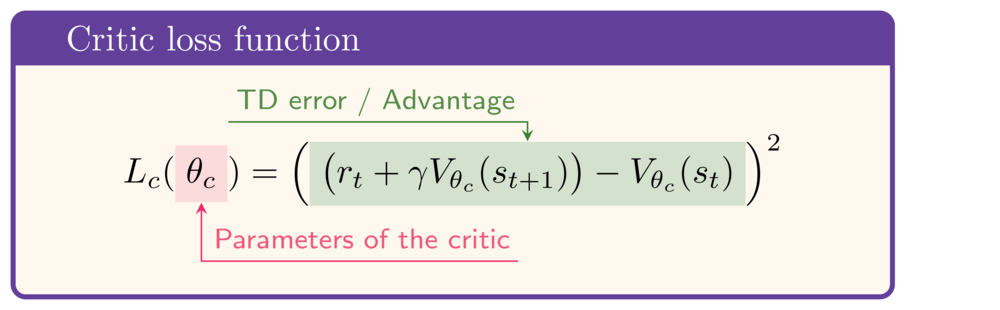

For reference, these are the expressions for the actor and critic loss functions respectively:

This exercise is part of the course

Deep Reinforcement Learning in Python

Exercise instructions

- Calculate the TD target.

- Calculate the loss for the Actor network.

- Calculate the loss for the Critic network.

Hands-on interactive exercise

Have a go at this exercise by completing this sample code.

def calculate_losses(critic_network, action_log_prob,

reward, state, next_state, done):

value = critic_network(state)

next_value = critic_network(next_state)

# Calculate the TD target

td_target = (____ + gamma * ____ * (1-done))

td_error = td_target - value

# Calculate the actor loss

actor_loss = -____ * ____.detach()

# Calculate the critic loss

critic_loss = ____

return actor_loss, critic_loss

actor_loss, critic_loss = calculate_losses(

critic_network, action_log_prob,

reward, state, next_state, done

)

print(round(actor_loss.item(), 2), round(critic_loss.item(), 2))