Barebone DQN loss function

With the select_action() function now ready, you are just one final step short of being able to train your agent: you will now implement calculate_loss().

The calculate_loss() returns the network loss for any given step of the episode.

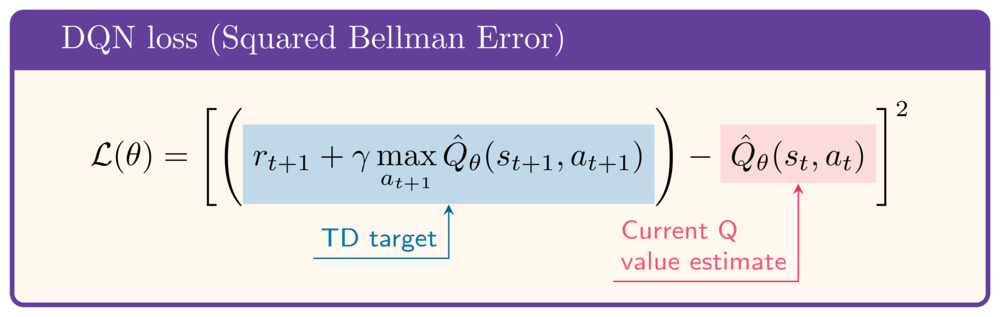

For reference, the loss is given by:

The following example data has been loaded in the exercise:

state = torch.rand(8)

next_state = torch.rand(8)

action = select_action(q_network, state)

reward = 1

gamma = .99

done = False

This exercise is part of the course

Deep Reinforcement Learning in Python

Exercise instructions

- Obtain the current state Q-value.

- Obtain the next state Q-value.

- Calculate the target Q-value, or TD-target.

- Calculate the loss function, i.e. the squared Bellman Error.

Hands-on interactive exercise

Have a go at this exercise by completing this sample code.

def calculate_loss(q_network, state, action, next_state, reward, done):

q_values = q_network(state)

print(f'Q-values: {q_values}')

# Obtain the current state Q-value

current_state_q_value = q_values[____]

print(f'Current state Q-value: {current_state_q_value:.2f}')

# Obtain the next state Q-value

next_state_q_value = q_network(next_state).____

print(f'Next state Q-value: {next_state_q_value:.2f}')

# Calculate the target Q-value

target_q_value = ____ + gamma * ____ * (1-done)

print(f'Target Q-value: {target_q_value:.2f}')

# Obtain the loss

loss = nn.MSELoss()(____, ____)

print(f'Loss: {loss:.2f}')

return loss

calculate_loss(q_network, state, action, next_state, reward, done)